Admittedly, I have yet to publish any large-scale

cross-linguistic study. Actually, I have not even completed data collection for

such a study yet. Cross-linguistic research on reading is hard. It is, however,

very important, as has been argued in several high-profile publications in

recent years (Blasi et al., 2022; Huettig & Ferreira, 2022; Share, 2021; Siegelman

et al., 2022; Vaid, 2022).

So, despite not having anything to show in terms of a successfully completed

study, I thought I would share my experiences with attempting to conduct cross-linguistic

research, and specifically, recruiting collaborators in very different

languages and cultures. Perhaps this will be useful for my fellow Anglocentric

or Eurocentric researchers, or perhaps some of the readers of this blog post will

have some ideas or insights about open questions or how I should do things

better in the future.

Cognitive processing underlying reading across languages has

been a focus of my research since my PhD. I’m afraid that I did not

particularly contribute to overcoming the focus, in the published literature, on

English and its close relatives, given that in my thesis, I compared reading in

English and German. Afterwards, although I did some work on statistical

learning and meta-science, I have found myself returning to the topic of reading

across languages, as this topic has always fascinated me. A few years ago, I

got a grant from the German Research Foundation to compare single-word reading

aloud in a handful of European languages. In addition to working on this study,

I am currently hoping to extend this work beyond Europe, and have by now

reached out across a number of countries and continents to collect data in orthographies

that, to date, we know relatively little about (relative to English, at any

rate). For the purpose of this blog post, I would like to talk about

some of the challenges that I have come across. I don’t want to provide a list

of all of the languages and countries where I have (successfully or unsuccessfully)

approached potential collaborators: I don’t, by any means, want to imply that

the challenges reflect anything bad, but I still prefer not to publicly map any

of the challenges that I have experienced to any specific culture.

In conducting cross-linguistic research, finding collaborators

is the first step. For pragmatic purposes, you need someone to recruit participants

and co-ordinate the data collection. You also need someone who knows the

language in question: Even if you are working with an amazing, high-quality

corpus, you need someone to check your stimuli and remove any items that may be

inappropriate for whatever reason (e.g., years ago, I heard a story about a

non-native speaker of English who was running a study with English-speaking children and

had to get the “pseudoword” C*NT removed from her list of stimuli). You need to

check if your instructions have been translated correctly, and if they even

make sense. And, importantly, involving speakers of the language in question

will allow you to identify aspects of the language that are of interest, but

that may be so different from the features of your own language that you are

not aware that they exist (Schmalz et al., 2024).

So, how does one go about finding collaborators? It is easy if

the language is sufficiently well represented in your research community that

you can approach people at conferences, or email researchers who have already

published studies on your topic of interest in their respective language. However,

the less well-represented the language is, the more difficult it becomes. I don’t

want to pretend to know the best solution, but rather want to summarise the

challenges that I have been facing, and some completely subjective thoughts

about how to approach certain situations. Of course, international researchers

are as diverse as the languages that they speak, and so are the cultures within

which they live and work. Thus, the challenges that I list do not apply across

the board, and other challenges may appear in different contexts. But as far as

my experience goes, these are some considerations that I’ve come across:

1) Cognitive science is not established as a science

everywhere. I study how children learn to read, what makes it challenging

for them to read, and how reading works in adults. If I rattle off this

elevator pitch, everyone, no matter their background, gets some idea of what I’m

doing. However, the study of reading is not considered a science everywhere: In

many places, the topic falls under “humanities”, and the attitude towards

understanding how reading works may differ from our approach, which involves

cognitive theories, computational models, and rigorous empirical testing. This

may lead to some confusion about what it is that I’m doing exactly, and

differences in our ideas about how to do experiments. I don’t have a solution

to this, but have concluded that it is important to gauge in advance to what

extent a collaborator is open towards a cognitive approach. After all, while

there is value in more qualitative approaches, this just isn’t my expertise or

research focus. Nevertheless, it is important to bear in mind that there will always

be some differences in the scientific approach: Differences tend to

increase with geographical distance, and unless your proposed collaborator has

spent some time in the same lab as you, they will have different ideas about best

practice in research. Incorporating fresh insights from their side will take

your research to the next level.

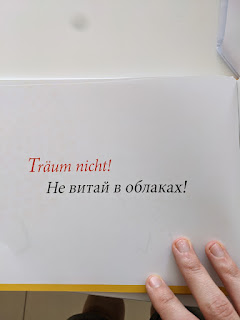

2) There are cultural differences in communication. These

go beyond the stereotypes that one may think of: when I started a large-scale

international collaboration, I found myself wondering if I would need to give

different deadlines to different countries to make sure that everyone would

submit their work by the actual deadline; however, the speed at which the

collaborators completed the task was not at all related to any stereotypes.

Instead, one striking example of cultural differences was in providing

feedback. Some cultures are more direct in providing feedback (e.g., “This is

wrong, you made a mistake” vs. “I’m sure I’m missing something, but I’m wondering

if you have considered the possibility…”). In addition, in some cultures,

people might not want to express any criticism at all, whether it is because

they assume that you know what you are doing, or that it’s your responsibility

to take the consequences for your own mistakes (i.e., that you’re an idiot, but

that’s none of their business), or because they see you as someone whose

authority should not be challenged. In other cultures, people cannot stand

watching someone else doing something that they consider wrong without

providing unsolicited advice or not very diplomatic commentary (I’m guilty of

this myself, and I blame my German half for this).

Then there are more subtle differences that may come across

as rude or inconsiderate, without us even being able to put our finger on them.

The way we address people when writing emails is very variable, with many

personal pet peeves and cultural differences. Some people may automatically move

an email to the spam folder if it addresses them without mentioning their

names; in some cultures, starting an email with “Dear colleague” is considered very

polite. Perhaps you have received emails from international students with unconventional

formulations – I strongly encourage everyone to look past their personal pet

peeves and potential spelling mistakes in their names, and respond to each email,

with the time and respect that they would show any other colleague. After

all, a student enquiring about the possibility of doing a research thesis with

you may be your international collaborator tomorrow, regardless of whether you are able

to help them at the time.

3) Bureaucracy. Communicating with cross-linguistic

collaborators has been stimulating, insightful, and fun. I absolutely cannot

say this about my local administration. If you have some funding for

cross-linguistic research, you need to consider the bureaucracy that goes into

transferring that money abroad to your collaborators. In my case, my local

university’s administration stalled this process for over two years, because

the relevant department is chronically understaffed. Maybe you are lucky and

things are different at your department. But in any case, it may be worth doing

some research about the relevant procedures in advance, and building a very

generous buffer into your timeline. None of my collaborators’ universities have

taken as long as my own institution to process the paperwork, but even here,

processing times have varied, especially if any action was required at the time

when most people in the country were on holidays (obviously, the timing and

duration of the university holidays vary).

4) Language is more than just language. You might be super

enthusiastic about a language that you are about to examine. But the native

speakers of this language are very likely to have a deeper attachment to the

language. For example, maybe their language is a part of a cultural identity

that was historically repressed. Maybe I am stating the obvious (though I call

myself a psycholinguist, my background and education are in psychology, not in

linguistics). Nevertheless, it is important to treat each language with respect

and to be mindful of people’s potential attachment to their language.

An example is a recent experience that I had in a

non-academic context. When it comes to reading in Arabic, I know that there is

some research showing the effect of diglossia: As most people in the Arabic-speaking

world speak a dialect that diverges to varying degrees from Modern

Standard Arabic (MSA), they learn to read in a language that is different from what

they learn at home. I mentioned this to an Arabic speaker, who started

explaining to me why his dialect is the closest to Modern Standard Arabic. As

it turns out, there seems to be (at least for some people) prestige associated

with a dialect being closer to MSA, probably also for religious reasons. Being

mindful of the significance that people (yes, researchers are people, too!)

attach to their languages is important. At the same time, to avoid looking like

you have a hidden agenda, it might be worth emphasising that you are neutral

about certain aspects, and have a reason for studying a language that does not

aim to either support or dispute a contentious claim.

5) People might be self-conscious about some things that

you are not aware of. The previous point relates to attitudes that researchers

may have towards their languages, but there may also be other beliefs and

attitudes that may affect your communication with an international

collaborator, or their willingness to collaborate with you. There may be some issues

that have not even crossed your mind but that affect how a potential

collaborator will evaluate you and your research proposal. If you are of

European descent, people might be a priori suspicious about your coming

in and pushing your own research idea. As an example, I once wanted to start a

research project in collaboration with a country where multilingualism is the

norm, and for reasons that have to do with colonialism, most people grow up with

a language of instruction that is different from their home language. Unfortunately,

that collaboration didn’t work out. In retrospect, I’m afraid that the reason

for this is as follows: The way that I presented the project may have come

across as wanting to show that it’s problematic that the people in that country

study in a language different from the one they speak at home, or that people speak a

different language from the one used at school and university. This was not my

intention, as I genuinely believe that multilingualism brings nothing but

benefits. It simply did not occur to me, at the time, that my project idea may

be construed that way.

6) Political issues. Your research may be completely

apolitical, but unfortunately, political issues may affect whether and how you can

do cross-linguistic studies. For example, my funder no longer allows for its money

to be used in a way that involves exchanging data with researchers based in

Russia. Such sanctions affect collaborations on a formal level, even if all

researchers involved share the same values as you, and might even be keen to

build connections to escape an oppressive regime. If a project involves a

collaboration with researchers in numerous countries, there may also be sanctions

between the respective countries, and some may explicitly prohibit a researcher

from Country X from collaborating with any researcher based in Country Y. If this

is the case, you might end up with a dilemma: Do I exclude the researcher

from Country X, the researcher from Country Y, or do I salami slice the project

and make two separate publications out of it? The restrictions may be formal,

issued by a funding body or university, but they may also be more subtle. Some people

may be very nervous about being in contact with colleagues of a certain

nationality, even in the absence of any official sanctions. From the outside,

we cannot judge the extent to which this nervousness is justified. I see our

role as trusting our collaborators, asking, when necessary, so we understand

the limitations and boundary conditions, showing our moral support, and – above

all – ensuring that we do not put collaborators into unpleasant or even

dangerous situations.

On the personal level, my experience has been exclusively

positive: Even when I’ve been working together with researchers whose home

countries don’t get along at all, the individuals have been very respectful and

friendly towards each other. As always, it is important not to assume that the

actions of a government reflect the attitudes of the people.

The bottom line. All around the world, children start

off with the same broad cognitive structures. The way that these structures

deal with the different scripts and orthographies is a fascinating question,

which we are only beginning to investigate systematically. There are certainly

many reasons why the science of reading is focussed on English and its European

relatives. Are researchers studying reading in English and other European orthographies

reaching out to researchers abroad? My suspicion is that the answer to this

question is “no”. A lack of experience with people from other cultures may be a

major reason. In the past few years, I have worked with people from all

continents aside from Antarctica, which has been a very enriching but humbling experience.

Despite having started off as someone from a bicultural family and having lived

on three different continents, I continue to learn from my international

collaborators, both about how to be a better colleague and a better researcher.

This is why I, despite being far from an expert on cross-cultural

collaboration, have decided to write up my experiences. I hope that my

experience report will encourage cross-cultural collaboration, increased

awareness of things to think about when approaching or communicating with

potential collaborators, and discussions about how to act in a culturally

sensitive and open-minded way.

References

Blasi, D. E., Henrich, J., Adamou, E., Kemmerer, D.,

& Majid, A. (2022). Over-reliance on English hinders cognitive science. Trends in Cognitive Sciences.

Huettig, F., & Ferreira, F. (2022). The

myth of normal reading. Perspectives on

Psychological Science, 17456916221127226.

Schmalz, X., Breuer, J., Haim, M.,

Hildebrandt, A., Knöpfle, P., Leung, A. Y., & Roettger, T. B. (2024). Let’s

talk about language—and its role for replicability. https://osf.io/preprints/metaarxiv/4sb7c

Share, D. L. (2021). Is the science of

reading just the science of reading English? Reading Research Quarterly,

56, S391-S402.

Siegelman, N., Schroeder, S., Acartürk, C.,

Ahn, H.-D., Alexeeva, S., Amenta, S., Bertram, R., Bonandrini, R., Brysbaert,

M., & Chernova, D. (2022). Expanding horizons of cross-linguistic research

on reading: The Multilingual Eye-movement Corpus (MECO). Behavior Research Methods, 1-21.

Vaid, J. (2022). Biscriptality: a neglected

construct in the study of bilingualism. Journal

of Cultural Cognitive Science, 6(2),

135-149.